Era of Ubiquitous Machine Learning

Machine learning is no longer the sole preserve of data scientists. The ability to apply machine learning to vast amounts of data is greatly increasing its importance and driving wider adoption. In 2017, machine learning capabilities are expected to become far more widely available in tools for both business analysts and end users, changing how corporations and governments conduct their business. Machine learning will also affect user interaction with everything from insurance and domestic energy to healthcare and parking meters.

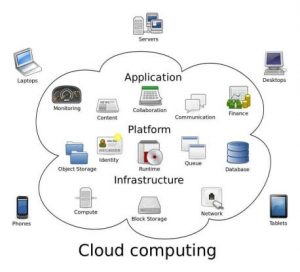

Bringing the cloud to the data

It's not always possible to move data to an external data center. Privacy issues, regulations, and data sovereignty concerns often preclude such actions. Sometimes, the volume of data is so great that the network cost of relocating it would exceed any potential benefits. In such instances, the answer is to bring the cloud to the data. In the future, more and more organizations will need to develop cloud strategies for handling data in multiple locations.

Applications are driving big data adoption

Early use cases for big data technologies focused primarily on IT cost savings and analytic solution patterns. Now, we're seeing a wide variety of industry-specific, business-driven needs driving a new generation of applications that depend on big data.

Internet of Things will integrate with enterprise applications

The Internet of Things is for more than inanimate objects. Everything from providing a higher level of healthcare for patients to enhancing customer experience via mobile applications requires monitoring and acting upon the data that people generate through the devices they interact with. The enterprise must simplify IoT application development and quickly integrate this data with business applications. The impact will be felt not only in the business world, but also in the exponential growth of smart city and smart nation projects across the globe.

Data virtualization becomes a reality

In 2017, data silos continue to proliferate in the enterprise on platforms like Hadoop, Spark, and NoSQL databases. Potentially valuable data stays dark because it's hard to find and hard to access. Organizations in 2017 will realize that it's not feasible to move everything into a single repository for unified access, and that a different approach is required. Data virtualization is emerging as a means to enable real-time big data analytics without the need for data movement.
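The idea of querying data where it lives can be illustrated with a minimal sketch. Everything here is hypothetical: `VirtualView`, its `register` and `query` methods, and the two in-memory lists standing in for a Hadoop table and a NoSQL collection are assumptions made for illustration, not the API of any data virtualization product.

```python
class VirtualView:
    """Hypothetical unified view: queries each source in place, copies nothing up front."""

    def __init__(self):
        self._sources = {}  # source name -> zero-argument function returning an iterator

    def register(self, name, fetch):
        # 'fetch' is called lazily at query time, so data stays in its own store.
        self._sources[name] = fetch

    def query(self, predicate):
        # Stream matching records from every registered source, tagged with origin.
        for name, fetch in self._sources.items():
            for record in fetch():
                if predicate(record):
                    yield name, record


# In-memory stand-ins for two separate silos (assumed data, for illustration only).
hadoop_rows = [{"customer": "a", "spend": 120}, {"customer": "b", "spend": 30}]
nosql_docs = [{"customer": "c", "spend": 75}]

view = VirtualView()
view.register("hadoop", lambda: iter(hadoop_rows))
view.register("nosql", lambda: iter(nosql_docs))

big_spenders = list(view.query(lambda r: r["spend"] > 50))
```

The caller sees one query interface, while each record is read from its original location only when the query runs.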

Kafka to be the runaway big data technology

Apache Kafka is already building momentum, and looks set to hit peak growth in 2017. Kafka employs a traditional, well-proven bus-style architecture pattern, but applies it to very large data sets and a wide variety of data structures. This makes it ideal for bringing data into your data lake and providing subscriber access to any events your consumers ought to know about.
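The bus-style pattern described above can be sketched in a few lines. This is a toy in-memory model, not Kafka's client API: the `TopicLog` class, its `publish` and `poll` methods, and the subscriber names are all hypothetical. It shows only the core idea that events are appended to a shared log and each subscriber reads at its own pace.

```python
from collections import defaultdict


class TopicLog:
    """Hypothetical append-only event log with an independent read offset per subscriber."""

    def __init__(self):
        self._events = []                 # events in publish order
        self._offsets = defaultdict(int)  # subscriber name -> index of next event to read

    def publish(self, event):
        # Events may have any structure; the log does not inspect them.
        self._events.append(event)
        return len(self._events) - 1      # position of the event in the log

    def poll(self, subscriber, max_events=10):
        # Return the next unread events for this subscriber and advance its offset.
        start = self._offsets[subscriber]
        batch = self._events[start:start + max_events]
        self._offsets[subscriber] = start + len(batch)
        return batch


log = TopicLog()
log.publish({"type": "order_created", "id": 1})  # structured event
log.publish("sensor-7,21.5")                     # raw text event

lake_batch = log.poll("data_lake_loader")  # hypothetical subscriber feeding a data lake
alert_batch = log.poll("alerting")         # a second subscriber sees the same events
```

Because each subscriber tracks its own offset, adding a new consumer does not affect the producers or the other consumers.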

A boom in pre-packaged integrated cloud data systems

Increasingly, organizations are seeing the value in data labs for experimenting with big data and driving innovation. But uptake has been slow. It isn't easy to build a data lab from scratch, whether on premises or in the cloud. Pre-packaged offerings that include integrated cloud services such as analytics, data science, data wrangling, and data integration are removing the complexity of do-it-yourself solutions. A boom in pre-packaged, integrated cloud data labs is expected in 2017.

Cloud-based object stores become a viable alternative to Hadoop HDFS

Object stores have many desirable attributes: availability, replication (across drives, racks, domains, and data centers), disaster recovery (DR), and backup. They're the cheapest, simplest places to store large volumes of data, and can directly accommodate frameworks like Spark. We see object storage technologies becoming a repository for big data as they become more tightly integrated with big data computing technologies, and they will provide a viable alternative to HDFS stores for many use cases in 2017. Both can exist as part of the same data-tiering architecture.

Next-generation compute architectures enable deep learning at cloud scale

The removal of virtualization layers. Acceleration technologies, such as GPUs and NVMe. Optimal placement of storage and compute. High-capacity, nonblocking networking. None of these things is new, but the convergence of all of them is. Together, they enable cloud architectures that realize order-of-magnitude improvements in compute, I/O, and network performance. In 2017, expect deep learning at scale, with easy integration into existing business applications and processes.

Hadoop security is no longer optional

Hadoop deployments and use cases are no longer predominantly experimental. Increasingly, they're business-critical to organizations like yours. As such, Hadoop security is no longer optional. In 2017, expect to deploy multilevel security solutions for your big data projects.